
In this tutorial, we will see how histogram equalization can be used to enhance contrast. Before performing histogram equalization, you must know two important concepts used in equalizing histograms: PMF and CDF. They are discussed in our tutorials on PMF and CDF; please visit them in order to fully grasp the concept of histogram equalization.

Histogram equalization is used to enhance contrast. However, it does not always increase contrast: there may be some cases where histogram equalization makes the contrast worse.

Let's start histogram equalization by taking the image below as a simple example. The histogram of this image is shown below. Now we will perform histogram equalization on it.

First, we have to calculate the PMF (probability mass function) of all the pixels in this image. If you do not know how to calculate the PMF, please visit our tutorial on PMF calculation.

Our next step is to calculate the CDF (cumulative distribution function). Again, if you do not know how to calculate the CDF, please visit our tutorial on CDF calculation.

For instance, let's assume that the CDF calculated in the second step looks like this. Then, in the next step, you multiply each CDF value by (number of gray levels - 1).

Now we come to the last step, in which we map the new gray level values to the number of pixels. Let's assume our old gray level values have these numbers of pixels. If we now map the old gray levels to their new values, this is what we get. Map these new values onto the histogram, and you are done.

Let's apply this technique to our original image.

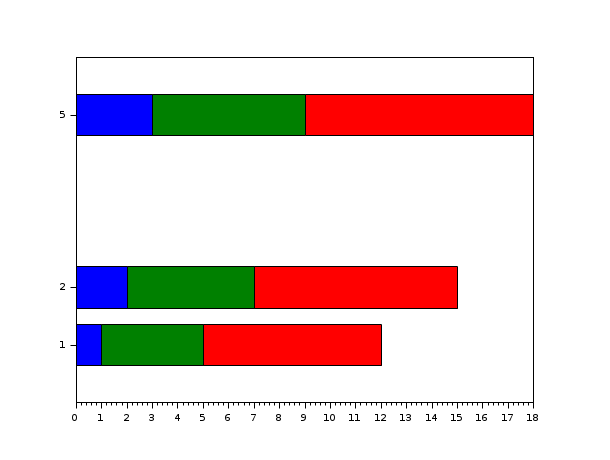
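The steps described above (PMF, then CDF, then multiplying by the number of gray levels minus 1, then remapping every pixel) can be sketched in plain Python. This is a minimal sketch, not the tutorial's own code: it assumes the image is given as a flat list of integer gray levels in `[0, levels - 1]`, and it rounds each scaled CDF value to the nearest integer level, since the text does not say how fractional values are handled. The function name `histogram_equalize` is hypothetical. For a 3-bpp image there are 2^3 = 8 gray levels, so each CDF value is multiplied by 7.

```python
from collections import Counter


def histogram_equalize(pixels, levels):
    """Hypothetical helper: equalize a flat list of gray levels in [0, levels - 1]."""
    n = len(pixels)
    counts = Counter(pixels)

    # Step 1: PMF, the fraction of all pixels that have each gray level
    pmf = [counts.get(g, 0) / n for g in range(levels)]

    # Step 2: CDF, the running sum of the PMF
    cdf = []
    total = 0.0
    for p in pmf:
        total += p
        cdf.append(total)

    # Step 3: multiply by (levels - 1) and round to the nearest integer level
    # (rounding is an assumption; Python's round() sends exact halves to even)
    mapping = [round((levels - 1) * c) for c in cdf]

    # Step 4: replace every pixel's old gray level with its new one
    return [mapping[g] for g in pixels], mapping


# A tiny 3-bpp "image" (8 gray levels, so the CDF is multiplied by 7)
equalized, mapping = histogram_equalize([0, 0, 1, 1, 1, 2, 3, 7], levels=8)
print(mapping)    # [2, 4, 5, 6, 6, 6, 6, 7]
print(equalized)  # [2, 2, 4, 4, 4, 5, 6, 7]
```

Returning the level-to-level `mapping` alongside the new pixels makes it easy to redraw the equalized histogram, which corresponds to the final "map these new values onto the histogram" step.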

We have already seen that contrast can also be increased using histogram stretching.

The following example uses Yellowbrick's `ResidualsPlot` to visualize the residuals of a Ridge regression model trained on the concrete dataset:

```python
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split
from yellowbrick.datasets import load_concrete
from yellowbrick.regressor import ResidualsPlot

# Load a regression dataset
X, y = load_concrete()

# Create the train and test data
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Instantiate the linear model and visualizer
model = Ridge()
visualizer = ResidualsPlot(model)

visualizer.fit(X_train, y_train)  # Fit the training data to the visualizer
visualizer.score(X_test, y_test)  # Evaluate the model on the test data
visualizer.show()                 # Finalize and render the figure
```

A common use of the residuals plot is to analyze the variance of the error of the regressor. If the points are randomly dispersed around the horizontal axis, a linear regression model is usually appropriate for the data; otherwise, a non-linear model is more appropriate. In the case above, we see a fairly random, uniform distribution of the residuals against the target in two dimensions. This seems to indicate that our linear model is performing well. We can also see from the histogram that our error is normally distributed around zero, which also generally indicates a well-fitted model.

Note that if the histogram is not desired, it can be turned off with the `hist=False` flag, for example `ResidualsPlot(model, hist=False)`.

The same plot can also be produced with the `residuals_plot` quick method, which creates the visualizer, fits it, scores it, and shows it in a single call:

```python
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split as tts
from yellowbrick.datasets import load_concrete
from yellowbrick.regressor import residuals_plot

# Load the dataset and split into train/test splits
X, y = load_concrete()
X_train, X_test, y_train, y_test = tts(X, y, test_size=0.2, shuffle=True)

# Create the visualizer, fit, score, and show it
viz = residuals_plot(RandomForestRegressor(), X_train, y_train, X_test, y_test)
```

class `ResidualsPlot(estimator, ax=None, hist=True, qqplot=False, train_color='b', test_color='g', line_color='#111111', train_alpha=0.75, test_alpha=0.75, is_fitted='auto', **kwargs)`

Visualize the residuals between predicted and actual data for regression problems. A residual plot shows the residuals on the vertical axis and the independent variable on the horizontal axis. If the points are randomly dispersed around the horizontal axis, a linear regression model is appropriate for the data; otherwise, a non-linear model is more appropriate.

Parameters:

- `estimator`: a Scikit-Learn regressor. Should be an instance of a regressor, otherwise a `YellowbrickTypeError` exception is raised on instantiation. If the estimator is not fitted, it is fit when the visualizer is fitted.
- `ax`: if `None` (the default), the current axes will be used (or generated if required).
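To make concrete what goes on the plot's vertical axis: a residual is the observed target value minus the model's prediction. The sketch below is plain Python with no Yellowbrick or scikit-learn calls; it fits a one-feature ordinary least-squares line (a hypothetical stand-in for the `Ridge` model above, using made-up data) and computes the residuals. The helper names `fit_line` and `residuals` are hypothetical. For a least-squares fit with an intercept, the training residuals sum to zero (up to floating-point error), so they are centered on the horizontal axis by construction.

```python
def fit_line(xs, ys):
    """Hypothetical helper: ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept, slope


def residuals(xs, ys, intercept, slope):
    """Hypothetical helper: residual = observed value - predicted value."""
    return [y - (intercept + slope * x) for x, y in zip(xs, ys)]


# Made-up data that is roughly linear with a little noise
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

a, b = fit_line(xs, ys)
res = residuals(xs, ys, a, b)

# Each (x, residual) pair is one point a residuals plot would scatter
for x, r in zip(xs, res):
    print(f"x={x:.1f}  residual={r:+.2f}")
```

Here the residuals alternate in sign with no obvious trend, which is the "randomly dispersed around the horizontal axis" pattern the text associates with a linear model being appropriate.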
